Why Terminology Is the Real AI Differentiator in 2026

The winners in healthcare AI won't have the best models — they'll have the cleanest data. Here's why terminology management is the competitive edge.

Wolters Kluwer's Anne Donovan said it plainly: "The winners in 2026 will be those who invest in data quality and terminology management. Leveraging FHIR-based terminology services will become essential for normalizing clinical data, maintaining accurate value sets, and ensuring consistent mapping across systems."

She's talking about the gap between having healthcare data and having usable healthcare data. And in 2026, that gap is where most AI projects stall.

The Model Isn't the Problem

Healthcare AI has a narrative problem. The industry obsesses over model benchmarks — which LLM scores highest on MedQA, which embedding model best captures clinical semantics. But the teams actually shipping healthcare AI products will tell you: the model is rarely what breaks.

What breaks is the data underneath.

A prior authorization system receives an ICD-10 code — say, E11.9 for type 2 diabetes. Simple enough. But the EHR also sends NDC codes for the patient's medications, LOINC codes for their lab results, and NPI identifiers for their providers. Each code comes from a different authority, updates on a different schedule, and maps to other code systems in non-obvious ways.
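To make that mix of authorities concrete, here is a minimal Python sketch that sorts the codes in one request by the system that issued them. The system URIs are the ones FHIR conventionally uses for these code systems. The payload, the function name, and the sample LOINC and NPI values are invented for illustration, and in a real FHIR resource an NPI would travel as an identifier rather than a coding.

```python
from collections import defaultdict

# Conventional FHIR system URIs for the code systems named above.
SYSTEM_NAMES = {
    "http://hl7.org/fhir/sid/icd-10-cm": "ICD-10-CM",
    "http://hl7.org/fhir/sid/ndc": "NDC",
    "http://loinc.org": "LOINC",
    "http://hl7.org/fhir/sid/us-npi": "NPI",
}


def group_by_authority(codings):
    """Group system/code pairs by the name of the issuing code system."""
    grouped = defaultdict(list)
    for coding in codings:
        name = SYSTEM_NAMES.get(coding["system"], "UNKNOWN")
        grouped[name].append(coding["code"])
    return dict(grouped)


# Illustrative payload only, not real patient data.
request_codings = [
    {"system": "http://hl7.org/fhir/sid/icd-10-cm", "code": "E11.9"},
    {"system": "http://hl7.org/fhir/sid/ndc", "code": "0071-0156-23"},
    {"system": "http://loinc.org", "code": "4548-4"},
    {"system": "http://hl7.org/fhir/sid/us-npi", "code": "1234567893"},
]

print(group_by_authority(request_codings))
```

Four codes, four authorities, four release schedules: anything the system cannot place lands under "UNKNOWN" instead of being silently passed along.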

The model doesn't struggle with reasoning about diabetes management. It struggles because the medication code it received maps to three different RxNorm concepts depending on the packaging, and it has no way to resolve which one is correct.

Why 2026 Is the Inflection Point

Three forces are converging that make terminology management suddenly urgent:

CMS-0057-F is now in effect. As of January 2026, payers must meet prior authorization turnaround times and report decision metrics. The full API compliance deadline, which requires FHIR-based Patient Access, Provider Access, Payer-to-Payer, and Prior Authorization APIs, lands January 1, 2027. Impacted payers, and the providers and vendors that connect to them, are building or buying FHIR infrastructure right now.

TEFCA hit scale. The trusted exchange network grew from 10 million to nearly 500 million records in a single year, with 12,130 organizations and 72,000+ connections live. More data flowing means more normalization needed.

AI companies entered healthcare. OpenAI selected b.well to power health data connectivity in ChatGPT. CMS's Digital Health Ecosystem has Amazon, Anthropic, Apple, Google, and OpenAI all committed to patient-centric data exchange. These companies need clean, normalized clinical data — not raw code dumps.

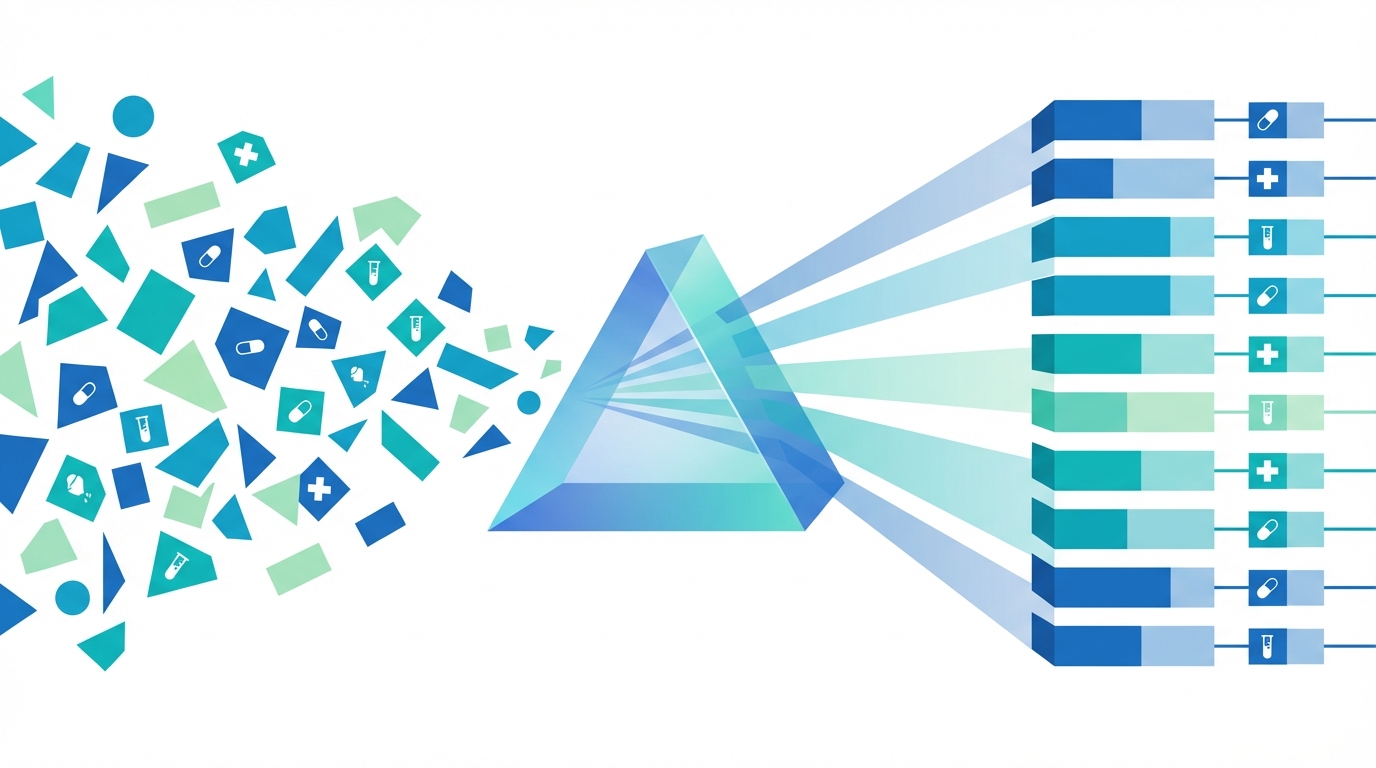

What "Data Quality" Actually Means in Practice

Donovan's quote mentions three specific capabilities. Each one maps to a concrete technical problem:

Normalizing clinical data means resolving ambiguity. The same drug can appear as an NDC (package-level), an RxNorm concept (anywhere from ingredient to branded drug), or a brand name (marketing-level). A system that treats these as interchangeable will produce incorrect results. Normalization means knowing that NDC 0071-0156-23 is atorvastatin calcium 10mg, which maps to RxCUI 617314, which has 3 equivalent SNOMED concepts.
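Before any lookup can happen, the NDC itself usually needs a canonical spelling. FDA-format NDCs are 10 digits in three hyphenated segments (4-4-2, 5-3-2, or 5-4-1), while billing systems generally expect an 11-digit 5-4-2 form. A minimal sketch of that padding step, with a hypothetical function name:

```python
# Segment-length patterns accepted by this sketch: the three 10-digit
# layouts plus an already-padded 5-4-2 input.
VALID_LAYOUTS = {(4, 4, 2), (5, 3, 2), (5, 4, 1), (5, 4, 2)}


def ndc_to_11_digit(ndc: str) -> str:
    """Convert a hyphenated NDC to the unhyphenated 11-digit 5-4-2 form."""
    parts = ndc.strip().split("-")
    if len(parts) != 3 or not all(p.isdigit() for p in parts):
        raise ValueError(f"expected three hyphen-separated digit groups: {ndc!r}")
    labeler, product, package = parts
    if (len(labeler), len(product), len(package)) not in VALID_LAYOUTS:
        raise ValueError(f"unrecognized NDC segment lengths: {ndc!r}")
    # Left-pad whichever segment is short with zeros.
    return labeler.zfill(5) + product.zfill(4) + package.zfill(2)


print(ndc_to_11_digit("0071-0156-23"))  # prints 00071015623
```

The hyphens matter: an unhyphenated 10-digit string cannot be padded safely, because nothing says which segment is the short one.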

Maintaining accurate value sets means keeping up with change. ICD-10-CM updates annually. RxNorm updates weekly. NDC codes appear and disappear as products enter and leave the market. An AI system trained on last year's codes will silently produce wrong answers when codes change — and in healthcare, wrong answers have consequences.
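One simple way to keep code changes from going unnoticed is to diff each new release against the previous one. The sketch below assumes each release has already been loaded into a plain code-to-description dictionary. The codes and descriptions are placeholders, not real ICD-10-CM entries.

```python
def diff_releases(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two code -> description snapshots and report what changed."""
    prev_codes, curr_codes = set(previous), set(current)
    return {
        "added": sorted(curr_codes - prev_codes),
        "retired": sorted(prev_codes - curr_codes),
        "revised": sorted(
            code for code in prev_codes & curr_codes
            if previous[code] != current[code]
        ),
    }


# Placeholder snapshots standing in for two annual releases.
last_year = {"X00.1": "Example condition", "X00.2": "Another condition"}
this_year = {"X00.1": "Example condition, revised wording", "X00.3": "New condition"}

changes = diff_releases(last_year, this_year)
print(changes)
```

Any code that shows up under "retired" or "revised" is a prompt to re-check the value sets, rules, and training data that still reference it.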

Ensuring consistent mapping across systems means cross-walking between terminologies reliably. When a FHIR Bundle contains an ICD-10 diagnosis code that needs a SNOMED equivalent for international exchange, that mapping must be deterministic and current. When an NDC needs to be enriched with drug interaction data keyed on RxNorm concepts, the linkage has to be right.

None of this is glamorous work. But it's the difference between an AI system that demos well and one that works in production.

The Build-vs-Buy Calculus

Every healthcare AI team faces this question: do we build our own terminology layer, or use an existing service?

The build option looks straightforward at first. Download the FDA's NDC directory, NLM's RxNorm files, CMS's ICD-10 tables. Parse them, load them into a database, expose an internal API. A competent engineer can prototype this in a week.

Then reality sets in:

- The NDC directory has 300K+ records that change daily. Your pipeline needs to detect additions, deactivations, and corrections.

- RxNorm ships a full release monthly, with weekly updates in between, as a set of relational files with 15+ tables and non-trivial join logic to resolve concepts.

- ICD-10-CM→SNOMED mappings come from SNOMED's Extended Map, which requires parsing the RF2 release format — a 1.5GB ZIP with its own data model.

- Cross-walks between systems (NDC→RxNorm→SNOMED, ICD-10→SNOMED) require maintaining transitive relationships that break when any upstream source changes.
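The last point is the easiest to underestimate. A rough sketch of a two-hop crosswalk shows why: the result is only as good as the weakest table in the chain. The NDC-to-RxCUI pairing below is the one quoted earlier in this article; the SNOMED identifiers are placeholders, and every function and class name is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CrosswalkResult:
    ndc: str
    rxcui: Optional[str]
    snomed: list[str]
    broken_at: Optional[str]  # name of the hop that failed, if any


def ndc_to_snomed(ndc, ndc_to_rxcui, rxcui_to_snomed) -> CrosswalkResult:
    """Follow NDC -> RxNorm -> SNOMED and report where the chain breaks."""
    rxcui = ndc_to_rxcui.get(ndc)
    if rxcui is None:
        return CrosswalkResult(ndc, None, [], "NDC->RxNorm")
    snomed = rxcui_to_snomed.get(rxcui, [])
    if not snomed:
        return CrosswalkResult(ndc, rxcui, [], "RxNorm->SNOMED")
    return CrosswalkResult(ndc, rxcui, sorted(snomed), None)


# Hand-made tables; real ones come from the upstream releases.
NDC_TO_RXCUI = {"0071-0156-23": "617314"}
RXCUI_TO_SNOMED = {"617314": ["SCT-PLACEHOLDER-A", "SCT-PLACEHOLDER-B", "SCT-PLACEHOLDER-C"]}

print(ndc_to_snomed("0071-0156-23", NDC_TO_RXCUI, RXCUI_TO_SNOMED))

# Simulate an upstream release that drops the second-hop mapping.
print(ndc_to_snomed("0071-0156-23", NDC_TO_RXCUI, {}))
```

Recording which hop failed, instead of returning an empty list, is a deliberate choice here: it turns a silent gap into something a pipeline can alert on.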

The prototype that took a week to build takes a team to maintain. And every hour spent maintaining data pipelines is an hour not spent on the AI application that's supposed to be your competitive advantage.

What This Means for Healthcare AI Teams

Donovan's observation isn't aspirational — it's descriptive. The teams winning in healthcare AI right now share a common trait: they treated data quality as infrastructure, not an afterthought.

A few practical implications:

Invest in your terminology layer early. It's tempting to hardcode a few lookups and move on. But terminology debt compounds. Every new feature that touches clinical codes inherits whatever shortcuts you took at the start.

Demand freshness guarantees. Ask your data source — whether internal or external — how often it updates, what its lag is from authoritative sources, and how it handles corrections. "We loaded it last quarter" is not an acceptable answer for production healthcare AI.
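A freshness guarantee can be checked mechanically. The sketch below flags any source whose last load is older than an allowed lag. The thresholds are assumptions loosely based on the update cadences mentioned earlier (daily NDC, weekly RxNorm, annual ICD-10-CM), and the dates are made up.

```python
from datetime import date, timedelta

# Assumed tolerances per source; tune these to your own requirements.
MAX_LAG = {
    "NDC": timedelta(days=1),
    "RxNorm": timedelta(days=7),
    "ICD-10-CM": timedelta(days=366),
}


def stale_sources(last_loaded: dict[str, date], today: date) -> list[str]:
    """Return the sources whose last load is older than the allowed lag."""
    return sorted(
        name for name, loaded in last_loaded.items()
        if today - loaded > MAX_LAG[name]
    )


# Illustrative load dates.
last_loaded = {
    "NDC": date(2026, 2, 28),
    "RxNorm": date(2026, 2, 1),
    "ICD-10-CM": date(2025, 10, 1),
}

print(stale_sources(last_loaded, today=date(2026, 3, 1)))  # prints ['RxNorm']
```

Run as a scheduled check, something like this makes "we loaded it last quarter" visible before a user finds out the hard way.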

Test at the boundaries. Unit tests that mock terminology lookups will pass even when the real data breaks. Integration tests against real, current data catch the failures that actually hit your users.

Separate terminology from application logic. Your AI model shouldn't know or care how NDC-to-RxNorm resolution works. It should call a service and get a clean answer. This separation makes your application more testable, more maintainable, and more resilient to changes in the underlying data.
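In code, that separation can be as small as one interface. The sketch below is one possible shape, not a prescribed design: the application depends only on a `TerminologyService` protocol, and an in-memory stand-in satisfies it for local development. All names are hypothetical, and the NDC-to-RxCUI pairing is the one quoted earlier in this article.

```python
from typing import Optional, Protocol


class TerminologyService(Protocol):
    """What the application needs to know about terminology, and nothing more."""

    def resolve_ndc(self, ndc: str) -> Optional[str]:
        """Return the RxCUI for an NDC, or None if it cannot be resolved."""
        ...


class InMemoryTerminology:
    """Stand-in backed by a dictionary; a real one might call an API."""

    def __init__(self, table: dict[str, str]) -> None:
        self._table = dict(table)

    def resolve_ndc(self, ndc: str) -> Optional[str]:
        return self._table.get(ndc)


def describe_medication(ndc: str, terminology: TerminologyService) -> str:
    """Application logic: it never sees how the resolution is done."""
    rxcui = terminology.resolve_ndc(ndc)
    if rxcui is None:
        return f"NDC {ndc}: unresolved, route to manual review"
    return f"NDC {ndc}: RxCUI {rxcui}"


service = InMemoryTerminology({"0071-0156-23": "617314"})
print(describe_medication("0071-0156-23", service))
print(describe_medication("0000-0000-00", service))
```

Swapping the dictionary for a hosted service, or for a fixture in a test, changes one constructor call and leaves `describe_medication` untouched.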

The Infrastructure Opportunity

The healthcare AI market is projected to reach $45 billion by 2026. But the infrastructure layer — the terminology services, normalization APIs, and data quality tools that make AI applications actually work — is still emerging.

This is what FHIRfly is built for. We maintain daily-updated APIs for NDC, RxNorm, ICD-10, LOINC, NPI, CVX, SNOMED, and FDA drug labels. We handle the parsing, normalization, cross-walks, and freshness so that AI teams can focus on their actual product.

The winners in 2026 will invest in data quality. We're here to make that investment easy.

Written by The FHIRfly Team — a collective of healthcare data experts, AI specialists, and industry veterans building better clinical coding APIs.