The 98% Problem: What Healthcare AI Teams Actually Build Before Writing a Single Prompt

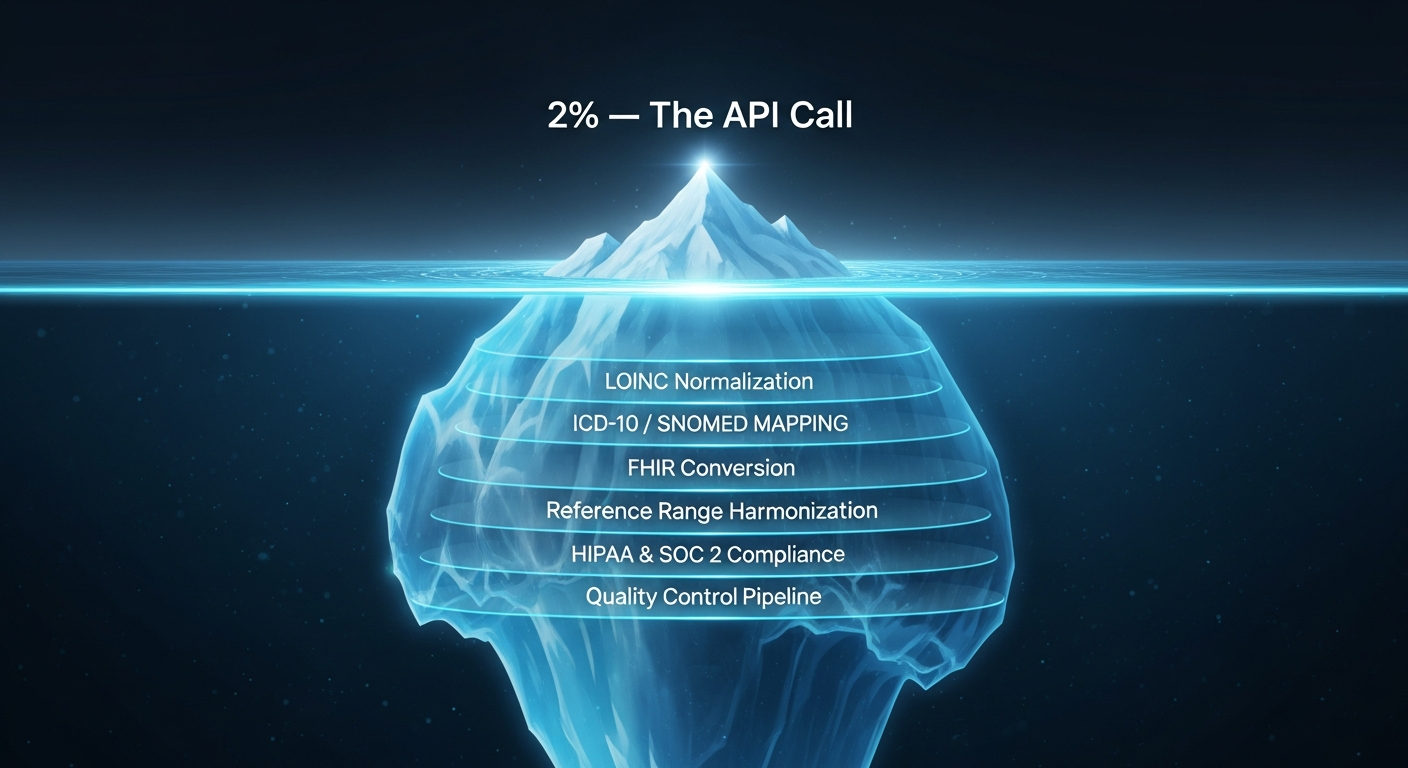

The API call is 2% of the work. The other 98% — LOINC normalization, FHIR conversion, compliance — is where healthcare AI teams actually spend their time.

Every week, another engineering team asks the same question: "Can we just plug OpenAI into our clinical workflow?"

The short answer is yes. The longer answer is that the API call is about 2% of the work.

BloodGPT published a detailed engineering breakdown of what it actually takes to build a production healthcare AI system on top of a large language model. Their analysis is thorough and worth reading in full — they've done the hard work of mapping out every component. The conclusion is striking: teams routinely underestimate the surrounding infrastructure by 10x.

This post unpacks why that happens, what the infrastructure actually looks like, and how terminology APIs can eliminate months of work from the critical path.

The Real Cost Breakdown

BloodGPT maps out the major engineering components required for a production healthcare AI system. The LLM API call is only one of them. The rest look like this:

| Component | Typical Timeline |
|---|---|
| PDF Parsing & Data Extraction | 3–6 months |
| **LOINC Code Mapping** | 2–4 months |
| **FHIR R4 Conversion** | 3–6 months |
| Reference Range Harmonization | 2–3 months |
| Data Storage & Retrieval | 1–2 months |
| User Management & Auth | 2–3 months |
| Visualization | 2–4 months |
| Localization | 2–4 months |
| QA & Testing | 3–6 months |
| Compliance (HIPAA / SOC 2 / GDPR) | 6–12 months |

The LOINC Code Mapping and FHIR R4 Conversion rows are terminology-specific. LOINC mapping alone (normalizing lab result names to standard codes) is estimated at 2–4 months of engineering, and FHIR conversion adds another 3–6 months. These are conservative estimates for a team that already understands the healthcare data landscape.

Total estimated cost: hundreds of thousands to well over a million dollars, spread across 16–28 months of development.
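Summing the table makes the relationship between these figures explicit. The sketch below copies the ranges from the table (the Component type and sumRange helper are illustrative names, not part of any library) and adds them up as if each component were built one after another. Done that way, the ten components total 26–50 months and the two terminology rows 5–10 months. Because the sequential total sits well above 16–28 months, that estimate presumably assumes several workstreams run in parallel.

```typescript
// Timeline ranges (in months) copied from the table above.
interface Component {
  name: string;
  minMonths: number;
  maxMonths: number;
  terminology: boolean; // true for the terminology-specific rows
}

const components: Component[] = [
  { name: "PDF Parsing & Data Extraction", minMonths: 3, maxMonths: 6, terminology: false },
  { name: "LOINC Code Mapping", minMonths: 2, maxMonths: 4, terminology: true },
  { name: "FHIR R4 Conversion", minMonths: 3, maxMonths: 6, terminology: true },
  { name: "Reference Range Harmonization", minMonths: 2, maxMonths: 3, terminology: false },
  { name: "Data Storage & Retrieval", minMonths: 1, maxMonths: 2, terminology: false },
  { name: "User Management & Auth", minMonths: 2, maxMonths: 3, terminology: false },
  { name: "Visualization", minMonths: 2, maxMonths: 4, terminology: false },
  { name: "Localization", minMonths: 2, maxMonths: 4, terminology: false },
  { name: "QA & Testing", minMonths: 3, maxMonths: 6, terminology: false },
  { name: "Compliance (HIPAA / SOC 2 / GDPR)", minMonths: 6, maxMonths: 12, terminology: false },
];

// Sum lower and upper bounds as if the components were built strictly in sequence.
function sumRange(items: Component[]): [number, number] {
  return items.reduce<[number, number]>(
    ([lo, hi], c) => [lo + c.minMonths, hi + c.maxMonths],
    [0, 0],
  );
}

const sequential = sumRange(components);
const terminologyOnly = sumRange(components.filter((c) => c.terminology));
```

The 5–10 month terminology subtotal is the same figure the post uses when discussing what terminology APIs take off the critical path.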

Why Terminology Is the Hard Part

Consider a seemingly simple task: normalizing a lab result. A patient's HbA1c result arrives from a lab. Depending on the source system, it could be labeled:

- "HbA1c"

- "Hemoglobin A1c"

- "Glycated Hemoglobin"

- "A1C"

- "Hgb A1c, Blood"

- LOINC code 4548-4

All of these refer to the same biomarker. LOINC contains over 109,000 codes, each defined by six axes (component, property, timing, system, scale, method). Accurate normalization requires fuzzy matching across naming variants, clinical context awareness, and a confidence scoring system to flag uncertain mappings for human review.
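A minimal sketch of the matching step makes those requirements concrete. It assumes a small, hypothetical synonym table (SYNONYMS is invented for illustration; a real one would be built from LOINC's name fields plus the aliases your sources actually send), scores each entry with token-overlap (Dice) similarity as a simple stand-in for fuzzier string matching, and flags any best match below an arbitrary 0.8 threshold for human review. Clinical context awareness, the hardest of the three requirements, is not attempted.

```typescript
// Hypothetical synonym table: each label variant points at a LOINC code.
const SYNONYMS: Record<string, string> = {
  "hba1c": "4548-4",
  "hemoglobin a1c": "4548-4",
  "glycated hemoglobin": "4548-4",
  "a1c": "4548-4",
  "hgb a1c blood": "4548-4",
  "glucose": "2345-7",
};

interface MatchResult {
  code: string | null;
  matchedLabel: string | null;
  confidence: number; // 0..1 token similarity of the best match
  needsReview: boolean; // true when confidence is below the threshold
}

// Lowercase, replace punctuation with spaces, split into tokens.
function tokenize(label: string): string[] {
  return label
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, " ")
    .trim()
    .split(/\s+/)
    .filter((t) => t.length > 0);
}

// Dice coefficient over token sets: 2|A ∩ B| / (|A| + |B|).
function dice(a: string[], b: string[]): number {
  const setA = new Set(a);
  const setB = new Set(b);
  if (setA.size + setB.size === 0) return 0;
  const overlap = Array.from(setA).filter((t) => setB.has(t)).length;
  return (2 * overlap) / (setA.size + setB.size);
}

// Pick the highest-scoring synonym and flag low-confidence matches for review.
function normalizeLabel(raw: string, threshold = 0.8): MatchResult {
  const tokens = tokenize(raw);
  let best: MatchResult = { code: null, matchedLabel: null, confidence: 0, needsReview: true };
  for (const [label, code] of Object.entries(SYNONYMS)) {
    const score = dice(tokens, tokenize(label));
    if (score > best.confidence) {
      best = { code, matchedLabel: label, confidence: score, needsReview: score < threshold };
    }
  }
  return best;
}
```

On "Hemoglobin A1c, Whole Blood" the sketch lands on 4548-4 but scores it at about 0.67, below the threshold, so the match is routed to review rather than silently accepted, which is what the confidence score is for.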

The same complexity applies to diagnoses (ICD-10-CM has 46,881 codes), clinical findings (SNOMED CT has 586,763 concepts), and medications (RxNorm has 1.9 million concepts). Each code system has its own hierarchy, versioning, and mapping conventions.

Building this from scratch means downloading bulk files from CMS, NLM, and WHO; writing parsers for each format; building a search index; maintaining update pipelines; and ensuring your local copy stays current. That's the 2–4 month estimate — for a single code system.
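The parse-and-index portion of that work can be sketched briefly, which also shows why it is not where the months go. The sketch assumes rows have already been read from the LOINC table file; the column names (LOINC_NUM, COMPONENT, PROPERTY, TIME_ASPCT, SYSTEM, SCALE_TYP, METHOD_TYP, LONG_COMMON_NAME) are taken from the distributed Loinc.csv as an assumption, so check them against the release you download. Each record keeps the six axes as separate fields, and a simple inverted index maps lowercase tokens to codes. Versioning, deprecations, and update pipelines are not shown.

```typescript
// Assumed row shape, keyed by column names from the LOINC table file.
type LoincRow = Record<
  "LOINC_NUM" | "COMPONENT" | "PROPERTY" | "TIME_ASPCT" | "SYSTEM" | "SCALE_TYP" | "METHOD_TYP" | "LONG_COMMON_NAME",
  string
>;

// One record per code, with the six LOINC axes kept as separate fields.
interface LoincRecord {
  code: string;
  component: string;
  property: string;
  timing: string;
  system: string;
  scale: string;
  method: string; // often empty: many codes are method-independent
  displayName: string;
}

function toRecord(row: LoincRow): LoincRecord {
  return {
    code: row.LOINC_NUM,
    component: row.COMPONENT,
    property: row.PROPERTY,
    timing: row.TIME_ASPCT,
    system: row.SYSTEM,
    scale: row.SCALE_TYP,
    method: row.METHOD_TYP,
    displayName: row.LONG_COMMON_NAME,
  };
}

function tokens(text: string): string[] {
  return text.toLowerCase().split(/[^a-z0-9]+/).filter(Boolean);
}

// Inverted index: token -> codes whose component or display name contains it.
function buildIndex(records: LoincRecord[]): Map<string, Set<string>> {
  const index = new Map<string, Set<string>>();
  for (const r of records) {
    for (const t of tokens(`${r.component} ${r.displayName}`)) {
      let bucket = index.get(t);
      if (!bucket) {
        bucket = new Set<string>();
        index.set(t, bucket);
      }
      bucket.add(r.code);
    }
  }
  return index;
}

// Return codes containing every query token (AND semantics), sorted.
function searchIndex(index: Map<string, Set<string>>, query: string): string[] {
  const qs = tokens(query);
  if (qs.length === 0) return [];
  let hits = Array.from(index.get(qs[0]) ?? new Set<string>());
  for (const t of qs.slice(1)) {
    const codes = index.get(t) ?? new Set<string>();
    hits = hits.filter((c) => codes.has(c));
  }
  return hits.sort();
}
```

Built from the 4548-4 row, the index answers "hemoglobin a1c" but returns nothing for "HbA1c", because that label does not appear in the two fields indexed here. Closing that gap requires synonym data that has to be curated and kept current.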

A Practical Alternative

Terminology APIs let you skip the build-from-scratch phase and go straight to using normalized clinical codes in your application. Here's what the workflow looks like in practice.

Normalizing a Lab Result

Instead of building a LOINC mapping engine, search for the code:

```bash
curl -s -H "x-api-key: $FHIRFLY_API_KEY" \
  "https://api.fhirfly.io/v1/loinc/search?q=hemoglobin+a1c&limit=3"
```

The response includes normalized LOINC codes with display names, component breakdowns, and FHIR-ready coding references:

```json
{
  "results": [
    {
      "code": "4548-4",
      "display": "Hemoglobin A1c/Hemoglobin.total in Blood",
      "component": "Hemoglobin A1c",
      "system": "Bld",
      "fhir_coding": {
        "system": "http://loinc.org",
        "code": "4548-4",
        "display": "Hemoglobin A1c/Hemoglobin.total in Blood"
      }
    }
  ]
}
```

That fhir_coding object drops directly into a FHIR Observation resource. No mapping table required.
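To show what that looks like, here is a minimal sketch that wraps a coding shaped like the fhir_coding object above into a FHIR R4 Observation. The interfaces cover only the handful of Observation fields used (resourceType, status, code, subject, valueQuantity); the patient ID and result value are placeholders, and "%" is passed as the UCUM unit because HbA1c is commonly reported as a percentage (a lab reporting IFCC units would pass mmol/mol instead).

```typescript
// Coding shape, matching the fhir_coding object in the response above.
interface FhirCoding {
  system: string;
  code: string;
  display?: string;
}

// Minimal subset of the FHIR R4 Observation resource; real resources allow many more fields.
interface FhirObservation {
  resourceType: "Observation";
  status: "registered" | "preliminary" | "final" | "amended";
  code: { coding: FhirCoding[]; text?: string };
  subject: { reference: string };
  valueQuantity: { value: number; unit: string; system: string; code: string };
}

// Wrap a normalized coding and a numeric result into a final Observation.
function toObservation(
  coding: FhirCoding,
  patientId: string,
  value: number,
  ucumUnit: string,
  sourceLabel?: string,
): FhirObservation {
  return {
    resourceType: "Observation",
    status: "final",
    code: { coding: [coding], text: sourceLabel },
    subject: { reference: `Patient/${patientId}` },
    valueQuantity: {
      value,
      unit: ucumUnit,
      system: "http://unitsofmeasure.org", // UCUM code system URI
      code: ucumUnit,
    },
  };
}

// Example: the HbA1c coding from the search response, with placeholder patient and value.
const obs = toObservation(
  { system: "http://loinc.org", code: "4548-4", display: "Hemoglobin A1c/Hemoglobin.total in Blood" },
  "example-patient",
  6.1,
  "%",
  "HbA1c", // the label as it arrived from the source lab
);
```

Keeping the lab's original label in code.text, alongside the normalized coding, preserves provenance for later review.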

Resolving a Diagnosis Code

When your AI system extracts a diagnosis mention from clinical text, you need to ground it to a standard code:

```bash
curl -s -H "x-api-key: $FHIRFLY_API_KEY" \
  "https://api.fhirfly.io/v1/icd10/search?q=type+2+diabetes&limit=3"
```

You get back structured ICD-10-CM codes with hierarchy information, and — if the code has HCC risk adjustment mappings — those are included too:

```json
{
  "results": [
    {
      "code": "E11.9",
      "display": "Type 2 diabetes mellitus without complications",
      "category": "E11",
      "chapter": "4",
      "hcc": {
        "assignments": [
          {
            "hcc_code": "37",
            "hcc_label": "Diabetes without Complication",
            "model": "V28",
            "coefficient": 0.097
          }
        ]
      }
    }
  ]
}
```
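If the diagnosis feeds risk adjustment, the next step is pulling the HCC assignments for the model version you report under. The sketch below types the response using only the field names in the example above (the full schema may contain more) and returns the top result's assignments filtered by model. Taking the first search result is a simplification; in practice the match should be confirmed by a coder or a constrained selection step before it drives anything downstream.

```typescript
// Types mirroring the example response above; the real schema may have more fields.
interface HccAssignment {
  hcc_code: string;
  hcc_label: string;
  model: string;
  coefficient: number;
}

interface Icd10Result {
  code: string;
  display: string;
  category: string;
  chapter: string;
  hcc?: { assignments: HccAssignment[] }; // absent when the code has no HCC mapping
}

interface Icd10SearchResponse {
  results: Icd10Result[];
}

// Return the top-ranked code and its HCC assignments for one model version.
function topHcc(
  response: Icd10SearchResponse,
  model: string,
): { code: string; hccs: HccAssignment[] } | null {
  const top = response.results[0];
  if (!top) return null;
  const hccs = (top.hcc?.assignments ?? []).filter((a) => a.model === model);
  return { code: top.code, hccs };
}
```

Filtering by model matters because the HCC crosswalk spans several CMS model versions (V21 through V28), so one code can carry more than one assignment.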

Cross-Referencing Medications

For medication reconciliation or drug interaction checks, look up an NDC code and get back the full drug profile:

```bash
curl -s -H "x-api-key: $FHIRFLY_API_KEY" \
  "https://api.fhirfly.io/v1/ndc/00002-1433-80?shape=standard"
```

The response includes proprietary name, active ingredients, dosage form, route, manufacturer, and RxNorm mappings — all structured for programmatic use.
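NDCs also arrive in more than one layout, which is worth normalizing before any lookup. Package labels carry a 10-digit code split 4-4-2, 5-3-2, or 5-4-1, while billing systems commonly use an 11-digit 5-4-2 form made by left-padding the short segment with a zero; 00002-1433-80 above is already 5-4-2. The helper below is a sketch, and which form the API expects is an assumption to confirm against its documentation.

```typescript
// Convert a hyphenated NDC in any standard layout to the 11-digit 5-4-2 form.
// Returns null for input that does not match a recognized layout.
function toNdc11(ndc: string): string | null {
  const parts = ndc.trim().split("-");
  if (parts.length !== 3 || !parts.every((p) => /^\d+$/.test(p))) return null;
  const [labeler, product, pkg] = parts;
  const layout = `${labeler.length}-${product.length}-${pkg.length}`;
  switch (layout) {
    case "5-4-2": // already 11 digits
      return `${labeler}-${product}-${pkg}`;
    case "4-4-2": // pad the labeler segment
      return `0${labeler}-${product}-${pkg}`;
    case "5-3-2": // pad the product segment
      return `${labeler}-0${product}-${pkg}`;
    case "5-4-1": // pad the package segment
      return `${labeler}-${product}-0${pkg}`;
    default:
      return null;
  }
}
```

Input without hyphens is rejected on purpose: a bare 10-digit NDC does not say which segment is short, so it cannot be padded reliably.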

Using the SDK

For TypeScript/Node.js applications, the @fhirfly-io/terminology SDK wraps these APIs with full type safety:

```typescript
import { FhirflyClient } from '@fhirfly-io/terminology';

const client = new FhirflyClient({ apiKey: process.env.FHIRFLY_API_KEY });

// Normalize a lab code
const loinc = await client.loinc.search({ q: 'hemoglobin a1c' });

// Resolve a diagnosis
const icd = await client.icd10.lookup('E11.9', { shape: 'standard' });

// Look up HCC risk adjustment
const hcc = await client.hcc.lookup('E11.9');

// Batch lookup: up to 100 codes at once
const batch = await client.ndc.lookupMany(
  ['00002-1433-80', '00074-3799-13', '00078-0357-15'],
  { shape: 'standard' }
);
```

Each method returns typed responses. Your IDE autocompletes the fields. No guessing at response shapes.
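Batch calls are capped at 100 codes, so longer lists have to be split. The sketch below chunks a list and runs the batches one after another through any function with a lookupMany-like call shape. BatchLookup is a hypothetical stand-in, not a type exported by the SDK; with the real client you would wrap client.ndc.lookupMany and adapt its return value to an array. Running batches sequentially is a conservative choice that avoids bursts against the API.

```typescript
// Hypothetical stand-in for a batch method such as client.ndc.lookupMany:
// takes a list of codes and resolves to one result per code.
type BatchLookup<R> = (codes: string[]) => Promise<R[]>;

// Split a list into consecutive chunks of at most `size` items.
function chunk<T>(items: T[], size: number): T[][] {
  if (size < 1) throw new RangeError("size must be at least 1");
  const out: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    out.push(items.slice(i, i + size));
  }
  return out;
}

// Run batches one after another and concatenate results in input order.
async function lookupAll<R>(codes: string[], lookup: BatchLookup<R>, maxBatch = 100): Promise<R[]> {
  const results: R[] = [];
  for (const batch of chunk(codes, maxBatch)) {
    results.push(...(await lookup(batch)));
  }
  return results;
}
```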

For Exploration and Investigation

Terminology APIs aren't just for production pipelines. During the exploratory phase of a healthcare AI project — when you're still understanding the data landscape — having instant access to clinical codes accelerates research:

- Data profiling: Validate codes appearing in your source data. Are they current? Deprecated? Mapped correctly?

- Schema design: Understand the structure of a code system before designing your data model.

- Prompt engineering: Ground your LLM prompts in real terminology. Instead of asking a model to "classify this condition," provide it with the actual ICD-10 candidate codes and their descriptions.

- RAG grounding: Use structured terminology data as retrieval context. A Wolters Kluwer analysis found that combining LLMs with curated terminology maps significantly improves both speed and accuracy compared to using an LLM alone.

- Compliance research: Look up codes referenced in CMS rules, payer policies, or clinical guidelines to understand their exact scope.
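For the prompt-engineering point, a minimal sketch: list candidate codes from a terminology search in the prompt, ask the model to answer with one of them, and accept the answer only if it is actually in the candidate set. The prompt wording and the Candidate type are illustrative assumptions, and the model call itself is left out so any LLM client can be plugged in.

```typescript
interface Candidate {
  code: string;
  display: string;
}

// Build a prompt that restricts the model to the supplied candidate codes.
function buildGroundedPrompt(clinicalText: string, candidates: Candidate[]): string {
  const options = candidates.map((c) => `- ${c.code}: ${c.display}`).join("\n");
  return [
    "Select the single ICD-10-CM code that best matches the clinical text.",
    "Answer with the code only, chosen from this list, or NONE if no code fits:",
    options,
    "",
    `Clinical text: ${clinicalText}`,
  ].join("\n");
}

// Accept the model's answer only if it is one of the candidates; otherwise flag it.
function validateAnswer(
  answer: string,
  candidates: Candidate[],
): { code: string | null; needsReview: boolean } {
  const cleaned = answer.trim().toUpperCase();
  const match = candidates.find((c) => c.code.toUpperCase() === cleaned);
  if (match) return { code: match.code, needsReview: false };
  // NONE is a legitimate "no match"; anything else is outside the list.
  return { code: null, needsReview: cleaned !== "NONE" };
}
```

An answer outside the list is treated as a possible hallucination and flagged for review, while an explicit NONE is accepted as a legitimate "no match".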

This is especially relevant for teams evaluating clinical NLP pipelines. Entity extraction tools (from John Snow Labs, Rhapsody, or custom models) map extracted entities to SNOMED CT, ICD-10, LOINC, and RxNorm. Having a terminology API alongside your NLP pipeline lets you validate those mappings in real time, catch edge cases, and build feedback loops.
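One edge-case check needs no API call at all. Every LOINC code ends in a Mod 10 check digit computed with the same doubling scheme as the Luhn algorithm, so malformed codes coming out of an NLP step can be caught locally before lookup. The sketch below implements that check; a passing check digit only proves the code is well formed, not that it exists or that the mapping is clinically correct, which still takes the terminology lookup.

```typescript
// Compute the LOINC Mod 10 check digit for the part before the hyphen.
// Digits are read from the right; every other digit, starting with the
// rightmost, is doubled, and 9 is subtracted when the product exceeds 9
// (equivalent to summing its two digits).
function loincCheckDigit(body: string): number {
  let sum = 0;
  for (let i = 0; i < body.length; i++) {
    let d = Number(body[body.length - 1 - i]);
    if (i % 2 === 0) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
  }
  return (10 - (sum % 10)) % 10;
}

// True when the string looks like a LOINC code and its check digit matches.
function isWellFormedLoinc(code: string): boolean {
  const m = /^(\d{1,7})-(\d)$/.exec(code.trim());
  if (!m) return false;
  return loincCheckDigit(m[1]) === Number(m[2]);
}
```

For example, loincCheckDigit("4548") returns 4, matching the 4548-4 code used throughout this post, so a transcription such as 4548-5 is rejected before it reaches the API.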

Fitting Into the Broader Stack

Terminology APIs don't replace the other components in BloodGPT's breakdown — you still need PDF parsing, compliance architecture, QA pipelines, and everything else. But they eliminate the terminology-specific work (estimated at 5–10 months combined) from your critical path.

The landscape of healthcare AI infrastructure is growing rapidly. OpenAI, Google, and Anthropic all launched dedicated healthcare products in early 2026. Platforms like Metriport handle FHIR data aggregation. eClinicalWorks launched an AI API Workbench for EHR customization. Each of these tools solves a different layer of the stack.

Terminology sits underneath all of them. Whether you're building a RAG system, a clinical NLP pipeline, or an AI agent that interacts with FHIR servers, you need accurate code lookups. A 2026 industry analysis put it directly: the winners this year will be those who invest in data quality and terminology management.

What's Available

FHIRfly's terminology API currently covers 16 clinical data sets, updated daily:

| Data Set | Record Count | Use Case |
|---|---|---|
| NPI | 9.4M providers | Provider lookup and validation |
| RxNorm | 1.9M concepts | Drug concept normalization |
| SNOMED CT | 586K concepts | Clinical findings and procedures |
| NDC | 377K packages | Drug product identification |
| FDA Drug Labels | 271K labels | Drug safety and labeling |
| LOINC | 109K codes | Lab and observation normalization |
| ICD-10-PCS | 79K codes | Procedure classification |
| ICD-10-CM | 46K codes | Diagnosis classification |
| Connectivity | 437K endpoints | FHIR endpoint discovery |
| HCC Crosswalk | 28K mappings | Risk adjustment (CMS models V21–V28) |
| OPCS-4 | 1,665 codes | UK procedure classification |
| And more | — | Vaccines, claims edits, fee schedules, coverage determinations |

Several data types (including ICD-10 and LOINC) include fhir_coding references for direct integration with FHIR resources — with more being added. Batch endpoints handle up to 100 codes per request. Search endpoints use Atlas Search for fuzzy matching across display names and descriptions.

Key Takeaways

- The LLM API call is roughly 2% of a production healthcare AI system. The other 98% is infrastructure — and terminology is one of the largest pieces.

- Building LOINC normalization, ICD-10 mapping, and FHIR conversion from scratch takes an estimated 5–10 months of engineering.

- Terminology APIs eliminate that work by providing normalized, FHIR-ready clinical codes via REST endpoints and typed SDKs.

- These APIs are useful throughout the development lifecycle — from early exploration and data profiling through production normalization pipelines.

Next Steps

- Explore the API: Browse the documentation and try a few lookups — no credit card required.

- Read BloodGPT's analysis: Their full build-vs-buy breakdown is an excellent reference for healthcare AI infrastructure planning. Their Claude-specific guide covers Anthropic's healthcare connectors.

- Check the SDK: The @fhirfly-io/terminology package provides typed TypeScript access to all endpoints.

- Try the MCP server: If you're building AI agents, the @fhirfly-io/mcp-server exposes terminology tools directly to Claude, ChatGPT, and other LLM frameworks.

Written by The FHIRfly Team — a collective of healthcare data experts, AI specialists, and industry veterans building better clinical coding APIs.